Star Ratings Are Broken — Here's the Data

As you suspected, crowdsourced ratings are not useful.

Was there ever a time when 5-star ratings were actually useful? We've all been burned by terrible 4.8+ rated places, found amazing 4.2s, and are often frustrated by just how many 4.5s there are.

It must be very frustrating for restaurant owners too. They know that a) people use tools like Google Maps, Yelp, Tripadvisor, OpenTable, etc. all the time to choose where to dine, and b) the ratings on those sites are mostly garbage.

My father has owned and operated restaurants for most of his career, and if you want to see him angry, just ask him how he feels about these platforms -- especially the ones that regularly shake down business owners in exchange for not highlighting negative reviews (yes, this happens, and it is core to the business model of some of these platforms).

I've never been a big contributor to review sites, but I am a big consumer of them, especially while travelling with kids. I'm grateful that so many people do contribute and share their thoughts because, even knowing how questionable the overall star ratings are, they are still usually the best information available for finding a place to eat (or stay, or shop, etc.). However, 'usually the best information' is well below the bar of what would actually be useful. The problems are fairly obvious:

a) People like different things. The reviewers might love things you hate or vice versa.

b) There are a lot of variables in play. Sometimes servers have off nights, sometimes you get seated next to a really loud group, sometimes it's raining and the outdoor patio gets soaked. You might be looking for a place with good food, but you are sorting by an avg. rating that factors in all of these things, none of which have anything to do with the food.

c) There is selection bias. Who are the people that leave so many reviews? Why do they do it? Do they tend to only do it when something bad happens? Or something really good? Who knows?

d) Many reviews are fake. Seriously, more than you think. This is a real problem and it's getting worse fast with AI. Sometimes it's the restaurants themselves buying/making reviews, but apparently it's often third parties doing it for various nefarious reasons that have nothing to do with the restaurant (google this, it's fascinating!).

I'm sure I'm leaving some stuff out, but you get the point. These ratings roll up so many different things that their aggregate is largely useless. Of course there are some heuristics you can use: a low avg. rating with lots of reviews, for example, is probably a credible negative signal. I used to think that a really high rating (e.g., 4.8+) with a high volume of reviews was a meaningful positive signal, but I've learned that rule doesn't hold up as well (looking at you, Lisbon...).

You can't trust the overall rating, but the text in the reviews is a different thing. We travelled for 3 months with two young kids in countries where the food wasn't always friendly to American kids. We spent a LOT of time reading reviews. We developed strategies like:

This took a long time and was not fun when eating out 2-3 meals a day. It did generally work though. By reading the full text of the reviews, you can start to pull apart the noise in the rating signal -- you can get a sense of what people were actually reacting to (e.g., "the food was amazing, but they told me my dog would have to leave if it kept barking" -- 2 stars), you can see what looks fake, you can zero in on the aspects that you actually care about. As long as you are willing to spend the time, this works much better than just trusting the overall rating.

If only there were a way to get the benefit of reading all that review text without actually having to read it all yourself. That's what I wanted, and it's why I spent a year building Seemor. It turns out LLMs are really good at reading lots of text and pulling out signals.

How Seemor works

We use AI to read all the reviews and determine what they are actually saying rather than trusting the rating, and we flag restaurants where the review patterns don't match the underlying quality.

Specifically, Seemor reads real customer reviews for each restaurant and scores it across 35 specific qualities -- things like food quality, consistency (will your experience on a Tuesday be as good as on Saturday), how innovative the cooking is, whether locals eat there or mostly tourists, service, value, noise level, and about 30 others. Each quality gets a score from 1 to 10. We combine those into a letter grade from A+ to F. An A means it's genuinely excellent. A C means it's mediocre. An F means something has gone meaningfully wrong.
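To make the idea of rolling per-quality scores into a letter grade concrete, here is a minimal sketch. It is not Seemor's actual formula: the weighted average, the `GRADE_CUTOFFS` table, the `letter_grade` function, and the quality names are all assumptions made up for illustration, since this article doesn't publish how the grades are computed.

```python
# Illustrative only: a weighted average of 1-10 quality scores mapped to a
# letter grade. The cutoffs below are HYPOTHETICAL, not Seemor's real ones.
GRADE_CUTOFFS = [
    (9.0, "A+"), (8.5, "A"), (8.0, "A-"),
    (7.5, "B+"), (7.0, "B"), (6.5, "B-"),
    (6.0, "C+"), (5.5, "C"), (5.0, "C-"),
    (4.0, "D"),
]


def letter_grade(scores, weights=None):
    """Combine per-quality scores (1-10) into one letter grade.

    scores:  dict of quality name -> score between 1 and 10
    weights: optional dict of quality name -> weight (defaults to 1.0 each)
    """
    if not scores:
        raise ValueError("need at least one quality score")
    weights = weights or {}
    total = 0.0
    weight_sum = 0.0
    for quality, score in scores.items():
        if not 1 <= score <= 10:
            raise ValueError(f"{quality} score {score} is outside 1-10")
        w = weights.get(quality, 1.0)
        total += w * score
        weight_sum += w
    if weight_sum <= 0:
        raise ValueError("weights must sum to a positive number")
    overall = total / weight_sum
    # Return the first grade whose cutoff the overall score reaches.
    for cutoff, grade in GRADE_CUTOFFS:
        if overall >= cutoff:
            return grade
    return "F"


# Hypothetical scores for an imaginary restaurant.
example = {"food_quality": 8.2, "service": 9.0, "value": 7.6}
print(letter_grade(example))
print(letter_grade(example, weights={"food_quality": 3.0}))
```

The useful property of any scheme like this is that the per-quality scores survive alongside the grade, so you can see which qualities pulled it up or down. A single star average throws that away.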

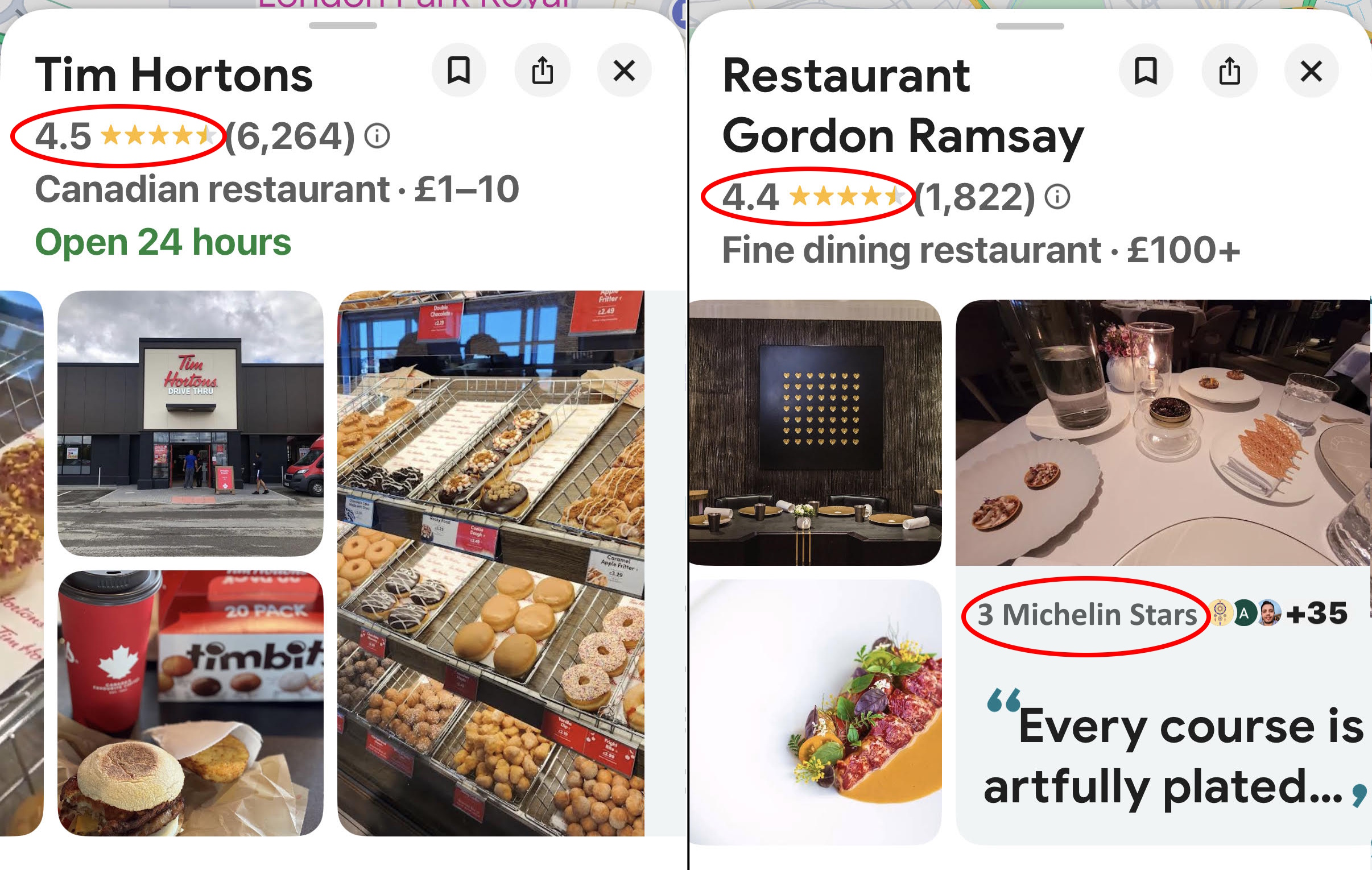

Here's what that looks like for Tim Hortons, the same Tim Hortons that Google rates higher than a three-Michelin-star restaurant:

How the numbers stack up

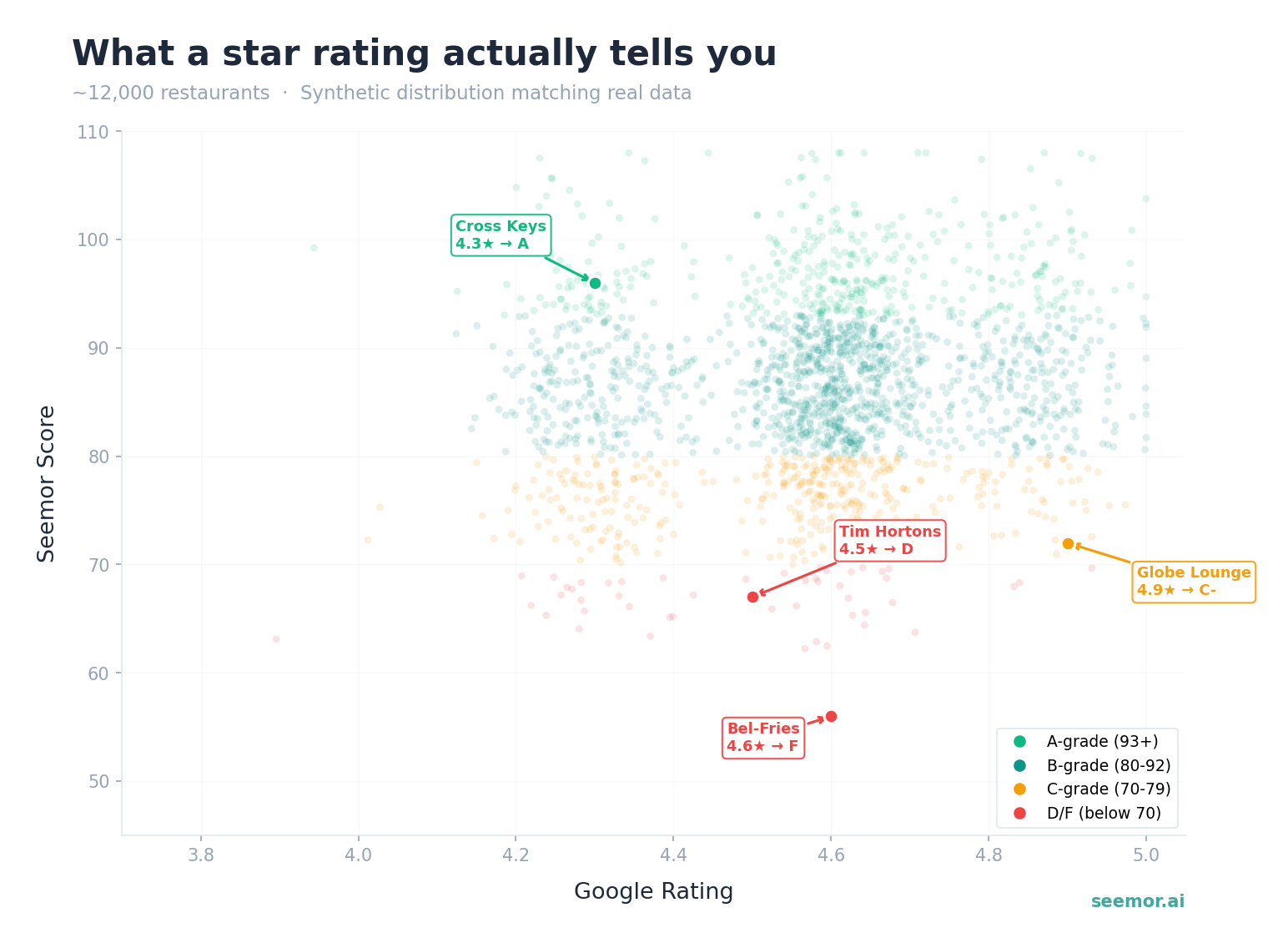

We compared our grades against Google's star ratings across more than 24,000 restaurants in 35 cities.

Let's take a close look at the 4.5-4.7 star range, since that's the range you are most likely to be choosing from. Picking a place there is a gamble: only 26% of those restaurants earn A-grades from Seemor. And 8% -- that's 829 restaurants -- are ones Google rates highly that we'd call mediocre (C grade or worse -- Seemor can be vicious).

| Google Stars | Restaurants | A-grades | C or Worse |
|---|---|---|---|
| 4.8+ | 3,518 | 1,407 (40%) | 212 (6.0%) |
| 4.5-4.7 | 10,294 | 2,654 (26%) | 829 (8.1%) |
| 4.2-4.4 | 3,818 | 517 (14%) | 431 (11.3%) |

Higher-rated places are better on average, but the spread at every level is enormous, so whatever the star rating, you are effectively rolling the dice.
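If you want to check the percentages yourself, they follow directly from the counts in the table. A small sketch (the names `BANDS` and `shares` are just for illustration; the counts are copied from the table):

```python
# Counts copied from the table: (restaurants, A-grades, C or worse).
BANDS = {
    "4.8+": (3518, 1407, 212),
    "4.5-4.7": (10294, 2654, 829),
    "4.2-4.4": (3818, 517, 431),
}


def shares(total, a_grades, c_or_worse):
    """Return (% A-grades, % C or worse) for one star band, to one decimal."""
    return (
        round(100 * a_grades / total, 1),
        round(100 * c_or_worse / total, 1),
    )


SHARES = {band: shares(*counts) for band, counts in BANDS.items()}

for band, (a_pct, c_pct) in SHARES.items():
    print(f"{band:>8}: {a_pct}% A-grades, {c_pct}% C or worse")
```

In every band, most restaurants land somewhere between an A-grade and a C, which is exactly the range where a star rating tells you the least.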

Some of the worst offenders

Bel-Fries Fast Food, New York. 4.6 stars, 969 reviews. We gave it an F -- food quality 5.2 out of 10. It's a fry shop on the Lower East Side that Google rates the same as some of the best restaurants in the city. Seemor's analysis: "Inconsistent fry quality, ranging from crispy to frozen-tasting and undercooked. Add-on pricing and small portions reduce perceived value." We also flagged review authenticity concerns.

Globe Lounge, London. 4.9 stars, 1,162 reviews -- basically a perfect score on Google. We gave it a C-. Food quality 6.3. Google categorizes it as a "Restaurant" serving "familiar meals, including stir fry dishes." It's actually a shisha lounge. The 4.9 is for the shisha, not the food, but Google doesn't make that distinction. You'd only figure that out by spending time reading a bunch of reviews, or by going there for stir fry and finding out the hard way.

Diner 24, New York. 4.8 stars, 5,987 reviews. We gave it a C-. Food quality 6.2. Seemor's take: "Inconsistent food execution, particularly with mains. The experience works best for breakfast, fries, and shakes rather than premium items." We also flagged review patterns. Nearly six thousand reviews and Google still can't tell you to stick to the milkshakes. The owner, meanwhile, responds to critical reviews with lines like "here is my problem with your BS" and "your money is no good here." A 4.8-star rating hides all of this.

Meanwhile, Google is burying some really good restaurants.

The Cross Keys, Chelsea. 4.3 stars on Google. We gave it an A -- food quality 8.2 out of 10. Seemor's take: "Exceptional food quality and outstanding service, anchored by a beautifully restored historic setting. Creative British gastropub execution and genuine hospitality from named staff elevate it among the region's finest neighborhood restaurants." Google ranks it below Tim Hortons.

Cafe Murano, Covent Garden. 4.3 stars. We gave it an A -- food quality 8.3. Angela Hartnett's acclaimed Italian. Seemor's take: "Exceptional food quality anchored by standout pasta and desserts, paired with outstanding service attentiveness and staff knowledge. Consistent execution across repeat visits positions it among Covent Garden's finest." Basically invisible on Google.

El Pastor, Soho. 4.3 stars. We gave it an A -- food quality 8.2. One of London's best taquerias. Seemor's take: "Exceptional food quality and outstanding service, with staff who genuinely enhance the experience. The vibrant Soho location and creative cocktail program add appeal." It gets 4.3 stars because some people complained about the wait. Google treats a complaint about a 10-minute wait the same as a complaint about undercooked food. Those aren't the same thing.

Even 5.0 stars doesn't mean what you think

We found 208 restaurants with a perfect 5.0 rating and 50+ reviews. Fewer than half are genuinely good -- only 44% earn A- or better from Seemor. Even at a perfect score, our grades span from A+ to C+.

Some of the weakest are experience venues: board game cafes, cannabis lounges, niche concept places. They earn perfect Google scores for vibe and community, not food. Others are actual restaurants where a perfect score hides real issues. Bottega 35 in Kensington has a 5.0 rating on Google. We gave it a B-. Seemor's take: "Food ranges from excellent pastas and seafood to inconsistent mains and pricing quirks." A perfect star rating, but the kitchen is inconsistent. You'd only know that by reading a lot of reviews.

Why I think this matters

We gave Restaurant Gordon Ramsay, his three-Michelin-star flagship in Chelsea, an A-. Seemor tells you exactly why it's not a full A: "inconsistent execution on select dishes and tight seating." You can decide whether those things matter to you. A 4.4-star rating gives you nothing to decide with.

That's really the point. No one needs to be told where to eat. They need enough information to decide for themselves. The data is all out there, but there is way too much of it and it's a mess. Sorting through that mess is what we built Seemor to do.

Based on 24,000+ restaurants across 35 cities in 7 countries.
